Pd Read Parquet

pandas 0.21 introduces new functions for Parquet. `DataFrame.to_parquet` writes a DataFrame to the binary Parquet format, and `pandas.read_parquet` loads it back when the data is available as Parquet files. Both take an `engine` argument (`pyarrow` or `fastparquet`); these engines are very similar and should read and write nearly identical Parquet. The documented signature is `pandas.read_parquet(path, engine='auto', columns=None, storage_options=None, use_nullable_dtypes=_NoDefault.no_default, dtype_backend=_NoDefault.no_default, **kwargs)`, and newer releases add `filesystem=None` and `filters=None` before `**kwargs`. The pandas API on Spark has a counterpart whose documented return type is `pyspark.pandas.frame.DataFrame`. In plain PySpark you need to create an instance of `SQLContext` first: `from pyspark.sql import SQLContext`, then `sqlContext = SQLContext(sc)`, then `sqlContext.read.parquet(my_file.parquet…`.

Several recurring questions surround these functions. The `SQLContext` approach will work from the pyspark shell, which leads to a follow-up: is there a way to read Parquet files from only dir1_2 and dir2_1? On the pandas side, one user writes, "For testing purposes, I'm trying to read a generated file with `pd.read_parquet`." Another, under the title "Reading Parquet to pandas: FileNotFoundError", reports code that otherwise runs fine. A third says, "I've just updated all my conda environments (pandas 1.4.1) and I'm facing a problem with the pandas `read_parquet` function."
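
For the `FileNotFoundError` case, one possible debugging aid is to resolve the path before handing it to pandas, so the error shows the absolute location that was actually tried. This is only a sketch: `read_parquet_checked` is a hypothetical helper name, not part of pandas, and it assumes a local path rather than a remote URL.

```python
from pathlib import Path

import pandas as pd


def read_parquet_checked(path):
    """Resolve `path` and fail with a clearer message if it does not exist.

    Hypothetical helper for local paths only; not a pandas API.
    """
    resolved = Path(path).expanduser().resolve()
    if not resolved.exists():
        raise FileNotFoundError(
            f"No Parquet file or directory at {resolved} "
            f"(working directory: {Path.cwd()})"
        )
    return pd.read_parquet(resolved)
```

A relative path is interpreted against the current working directory, which often differs between a notebook, a test runner and a script, so printing both values tends to narrow the problem down quickly.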

A year's worth of data is about 4 GB in size. Right now I'm reading each directory and merging the DataFrames using `unionAll`. To read a Parquet file in an Azure Databricks notebook, use the class `pyspark.sql.DataFrameReader` directly so that the data loads as a PySpark DataFrame rather than through pandas: `df = spark.read.format("parquet").load('<parquet file>')`. In pandas, `DataFrame.to_parquet(path=None, engine='auto', compression='snappy', index=None, partition_cols=None, storage_options=None, **kwargs)` writes a DataFrame to the binary Parquet format, and reading with an explicit engine looks like `import pandas as pd` followed by `pd.read_parquet('example_pa.parquet', engine='pyarrow')`. One reported attempt on Windows sets `parquet_file = r'f:\python scripts\my_file.parquet'` and then calls `file = pd.read_parquet(path = parquet…`.

PySpark read parquet Learn the use of READ PARQUET in PySpark

`sqlContext.read.parquet(dir1)` reads Parquet files from both dir1_1 and dir1_2. Two related reports: "I'm working on an app that is writing Parquet files," and "I get a really strange error that asks for a schema."

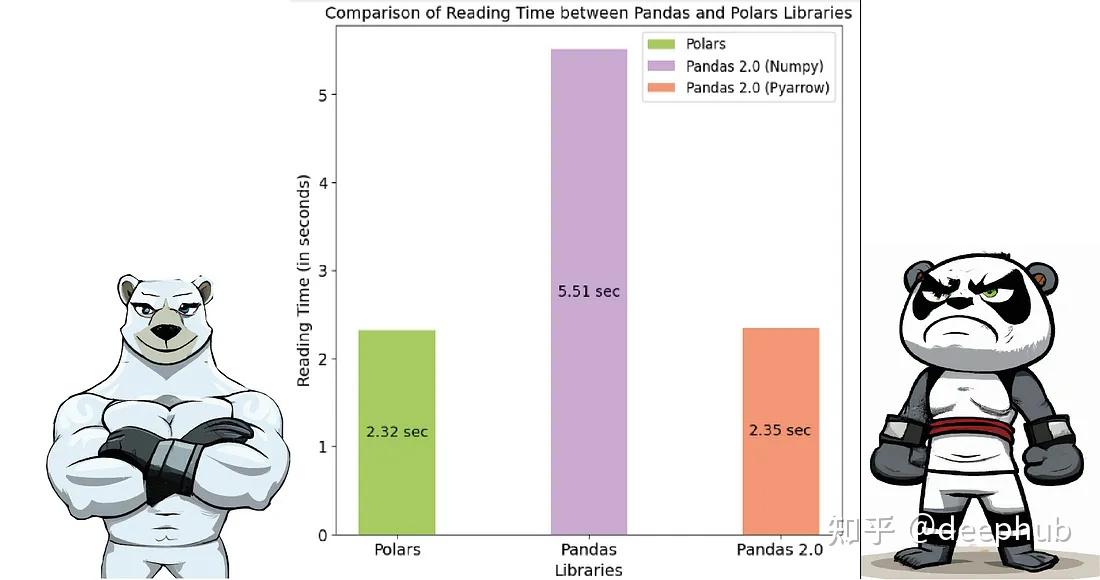

Pandas 2.0 vs Polars: a comprehensive speed comparison (Zhihu)


Modin ray shows error on pd.read_parquet · Issue 3333 · modinproject


How to read parquet files directly from azure datalake without spark?

With the fastparquet engine, the call is `import pandas as pd` followed by `pd.read_parquet('example_fp.parquet', engine='fastparquet')`.

python Pandas read_parquet partially parses binary column Stack



Spark Scala 3. Read Parquet files in spark using scala YouTube


pd.read_parquet Read Parquet Files in Pandas • datagy


How to resolve Parquet File issue




For Testing Purposes, I'm Trying to Read a Generated File With pd.read_parquet

Note that `april_data = sc.read.parquet('somepath/data.parquet…` reads the file as a Spark DataFrame, whereas `pd.read_parquet` returns a pandas DataFrame.

Right Now I'm Reading Each Dir and Merging DataFrames Using unionAll

